INTRODUCTION

They say that a few words could bring down entire empires, start a war or cause a revolution. We speak to express. Voice has been the primary medium of communication between humans.

Wouldn’t it be wonderful if you could speak to the web pages on your screen and tell them what to do, and they in turn would respond to your instructions? Imagine the power that voice recognition could bring to modern web applications. From filling in forms, searching with your voice, replying to your mail, chatting while playing an online game or plain navigational controls, to telling web sites to show you your favourite movie or play your favourite song, the possibilities are truly endless.

Voice recognition has been around for quite some time in native operating systems as well as in smartphones and other smart devices. Google Now, Siri and Cortana are widely used on mobile devices today. Be it high performance fighter aircraft terminals, VOIP telephony, gaming or handsfree computing, speech recognition has already played significant roles.

However, the web hasn’t seen major adoption or usage of voice recognition or voice controlled commands. This has largely been due to the lack of good speech recognition capabilities in browsers, as well as the lack of initial support for it in HTML. Therefore speech recognition is yet to become popular as a medium of interaction between the user and the web.

But Google Chrome rolled out a speech recognition engine bundled with the browser in version 25. So you can now invite users to talk to your web applications and process their speech in various languages recognised around the world.

WHY ANNYANG?

The library that we are going to use today is known as “annyang” written by a well known open source contributor named Tal Ater.

The reason we chose this library is primarily due to the following facts:

- Uses the highly accurate speech recognition engine provided by Chrome.

- It’s pretty lightweight (just 2kb when minified).

- Progressively enhances browsers that support speech recognition, while leaving users with older browsers unaffected.

- No external dependencies are required.

- Can be easily integrated with Speech KITT, a flexible GUI for interacting with speech recognition (also written by Tal Ater).

- It’s free to use!

GETTING STARTED

There’s a pretty self-explanatory demo page at the official site of annyang (http://dgit.in/annyang) which shows an integration with the Flickr API. It’s a must visit for beginners starting with annyang. The instructions to get started are quite simple.

<script src=”//cdnjs.cloudflare.com/ajax/libs/annyang/2.5.0/

annyang.min.js”></script>

<script>

if (annyang) {

//If supported in the browser..

When your speech recognising application is ready to talk to you

Let’s define our first command– First the text we expect, and then the function it should call

var commands ={‘Say hi to Eliza’: function() {alert(‘Hi Eliza’);}};

Add our commands to annyang

annyang.addCommands(commands);

Start listening. You can call this here, or attach this call to

an event, button, etc.

annyang.start();} </script>

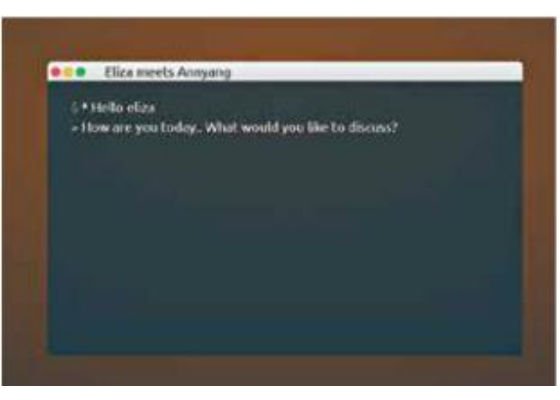

ANNYANG MEETS ELIZA

We are going to build a small demo with a JavaScript implementation of one of the first chat-bots/natural language processors in history, known to the developer fraternity as ELIZA.

ELIZA is a computer program that emulates a Rogerian psychotherapist and was at one time so popular that many people thought it was human. We shall add a twist to the original ELIZA program by interacting with it via voice instead of the conventional typed interaction.

(The full project can be forked from http://dgit.in/ElzaAnyng) Note: We shall not discuss the complete creation of ELIZA in JavaScript, as it is readily available on the web thanks to a few wonderful developers like George Dunlop.

STEP BY STEP

Step 1: Add annyang as mentioned previously.

Step 2: Add the .js file for ELIZA

<script src="eliza.js"></script>

Step 3: Make sure annyang is supported

if (annyang) {
  // If supported in the browser
  // Our code to interact with annyang...
} else {
  // Do something for unsupported browsers.
}

In our case we shall show an input box to still allow interactions with ELIZA in non-supported browsers.
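One way to wire up such a fallback is sketched below. It assumes a text input element (passed in as `inputBox`) and the `dialog()` function from eliza.js. The helper name `enableTypedFallback` is made up for illustration and is not part of annyang or of the demo project.

```javascript
// Hypothetical helper: lets the user type to ELIZA when annyang is unavailable.
// `inputBox` is any text input element; `dialog` is the function from eliza.js.
function enableTypedFallback(annyang, inputBox, dialog) {
  if (annyang) {
    return false; // Speech recognition is supported, no fallback needed.
  }
  inputBox.hidden = false; // Reveal the text box for typed input.
  inputBox.addEventListener('keydown', function (event) {
    var text = inputBox.value.trim();
    if (event.key === 'Enter' && text !== '') {
      dialog(text);        // Same entry point the voice command would use.
      inputBox.value = '';
    }
  });
  return true;
}
```

In a page this could be called as enableTypedFallback(window.annyang, document.getElementById('typed-input'), dialog), where 'typed-input' is an assumed element id.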

Step 4: Define our command(s)

// * The key is the phrase you want your users to say.
// * The value is the action to do.
// You can pass a function, a function name (as a string), or
// write your function as part of the commands object.
// *tag is a splat which gets passed as an argument to the
// function defined in the value.
var commands = {
  '*tag': sayToEliza
};

Eliza in action via Annyang

The commands can be in the form of named variables, splats, and optional words or phrases.

// The handlers are defined first so that they exist when the
// commands object is built.
var function1 = function(tag) { /* Do something */ };
var calculateProfits = function(month) { /* Do something */ };
var greeting = function() { /* Do something */ };

var commands = {
  // annyang will capture anything after a splat (*) and pass
  // it to the function, e.g. saying "Find a Dog and a Cat" is the
  // same as calling function1('Dog and a Cat');
  'find a *tag': function1,
  // A named variable is a one-word variable that can fit
  // anywhere in your command,
  // e.g. saying "calculate February stats" will call
  // calculateProfits('February');
  'calculate :month stats': calculateProfits,
  // By defining a part of the following command as optional,
  // annyang will respond to both "say hello to my little friend"
  // and "say hello friend"
  'say hello (to my little) friend': greeting
};
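To make the three pattern types more concrete, here is a simplified sketch of how a command phrase could be turned into a regular expression and matched against recognised speech. It is an illustration written for this article, not annyang's actual source, and the function names `commandToRegExp` and `runCommand` are assumptions.

```javascript
// Simplified sketch of command matching; NOT annyang's actual source.

// Escapes characters that have a special meaning in a regular expression.
function escapeRegExp(text) {
  return text.replace(/[-{}\[\]+?.,\\^$|#()*]/g, '\\$&');
}

// Converts a command phrase into a case-insensitive RegExp in a single pass.
function commandToRegExp(command) {
  var source = command.replace(
    /\s*\(([^)]*)\)\s*|:\w+|\*\w+|[-{}\[\]+?.,\\^$|#()*]/g,
    function (match, optionalWords) {
      if (optionalWords !== undefined) {
        // "(optional words)" may be present or absent.
        return '\\s*(?:' + escapeRegExp(optionalWords) + ')?\\s*';
      }
      if (match.charAt(0) === ':') {
        return '(\\S+)'; // Named variable: captures exactly one word.
      }
      if (match.charAt(0) === '*') {
        return '(.*?)';  // Splat: captures the rest of the phrase.
      }
      return '\\' + match; // Any other special character is matched literally.
    }
  );
  return new RegExp('^' + source + '$', 'i');
}

// Runs the first command whose pattern matches the spoken phrase and
// passes the captured words to its handler.
function runCommand(commands, phrase) {
  for (var pattern in commands) {
    var found = commandToRegExp(pattern).exec(phrase);
    if (found) {
      return commands[pattern].apply(null, found.slice(1));
    }
  }
  return undefined;
}
```

With this sketch, runCommand(commands, 'calculate February stats') would call calculateProfits('February'), and both greeting phrases would reach greeting().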

Step 5: Define our functions that will run when a command is matched

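// A function declaration is hoisted, so the commands object in
// Step 4 can refer to it even though it is written afterwards.
function sayToEliza(tag) { dialog(tag); }

The function dialog() is defined in our eliza.js (see Step 8).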

Step 6: Pass the commands to annyang

// Add voice commands to respond to

annyang.addCommands(commands);

Step 7: Start listening on Annyang

// You can call this within the body of the ‘if’

// block, or attach this call to an event,

// button, etc.

annyang.start();

Step 8: Pass the best matched command to Eliza to get a response via dialog() function

// Here the string passed by annyang is being used as 'Input'
function dialog(Input) {
  chatter[chatpoint] = " * " + Input;
  elizaresponse = listen(Input);
  setTimeout("think()", 500);
  chatpoint++;
  if (chatpoint >= chatmax) {
    chatpoint = 0;
  }
  return write();
}

And bingo! We are now set to go!

IMPORTANT:

- Allow microphone access to ‘annyang’ from your browser.

- It is advised to use Google Chrome (Windows) / Chromium (Linux) to unveil the full potential of the annyang library.

Tip: Try http://dgit.in/TAterGH for ready-to-use UI elements with annyang.

ANNYANG API REFERENCE:

Here are some of the annyang methods that you can use to expand beyond the basic project explained here.

- init(commands, [resetCommands=true]): Initialize annyang with a list of commands to recognize.

- start([options]): Start listening. Options: autoRestart, continuous, paused.

- abort(): Stop listening, and turn off mic.

- pause(): Pause listening.

- resume(): Resumes listening and restores command callback execution.

- setLanguage(language): Set the language the user will speak in, defaults to ‘en-US’.

- addCommands(commands): Add commands that annyang will respond to.

- removeCommands([commandsToRemove]): Remove existing commands.

- trigger(string|array): Simulate speech being recognized.

- isListening(): Returns true if speech recognition is currently on.

- getSpeechRecognizer(): Returns the instance of the browser’s SpeechRecognition object.

- addCallback(type, callback, [context]): For events, see Full API docs.

- removeCallback(type, callback): Remove callback events.
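The sketch below shows how a few of these methods might be combined. The annyang object is passed in as a parameter so the function can also be tried with a stub, and `log` is a hypothetical logging function such as console.log. The event names 'resultMatch' and 'resultNoMatch' are taken from the annyang 2.x documentation as far as we know, so check them against the full API docs for the version you use.

```javascript
// Sketch only: combines several annyang methods from the list above.
// `annyang` is the library object; `log` is an assumed logging function.
function setUpVoiceControl(annyang, log) {
  // Recognise British English instead of the default 'en-US'.
  annyang.setLanguage('en-GB');

  // A spoken command that turns the microphone off again.
  annyang.addCommands({
    'stop listening': function () { annyang.abort(); }
  });

  // Report what was heard when a command matched...
  annyang.addCallback('resultMatch', function (userSaid, commandText) {
    log('Heard "' + userSaid + '", matched "' + commandText + '"');
  });

  // ...and list the recognised phrases when nothing matched.
  annyang.addCallback('resultNoMatch', function (phrases) {
    log('No command matched: ' + phrases.join(' | '));
  });

  // Restart automatically if recognition ends unexpectedly.
  annyang.start({ autoRestart: true, continuous: false });
}
```

In the browser this could be called as setUpVoiceControl(annyang, console.log.bind(console)) inside the 'if (annyang)' block.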

Find the full API docs at http://dgit.in/AnyngAPI

THE HTML5 WAY

Annyang internally uses the HTML5 Speech Recognition API. You can choose not to use annyang and work directly with the native JS API provided by HTML5 as well. To do so, all you need to do is create a ‘webkitSpeechRecognition’ object and handle its ‘onresult’ event.

var recognition = new webkitSpeechRecognition();
recognition.onresult = function(event) {
  console.log(event); // or do something else
};
recognition.start();

Calling start() prompts the user to allow access to the microphone. Once access is granted, the user can start talking into the microphone. After the user finishes, the Speech Recognition API will fire the ‘onresult’ event and make the results of the recognised speech available as a JavaScript object.
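The result object is nested: event.results is a list of results, each result holds one or more alternatives, and each alternative carries a transcript. A minimal sketch of pulling the recognised text out of it follows; the helper name `finalTranscript` is our own.

```javascript
// Collects the text of every finalised result in a 'result' event.
function finalTranscript(event) {
  var text = '';
  // event.resultIndex is the first result that changed in this event.
  for (var i = event.resultIndex; i < event.results.length; i++) {
    var result = event.results[i];
    if (result.isFinal) {
      text += result[0].transcript; // [0] is the most likely alternative.
    }
  }
  return text.trim();
}

// Possible use in the browser (recognition is a webkitSpeechRecognition object):
// recognition.onresult = function (event) {
//   console.log(finalTranscript(event));
// };
```

Interim (non-final) results only appear when the recogniser's interimResults property is set to true, which is why the sketch checks isFinal before using a result.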

Set a language

You can set a language or dialect from the vast number of available languages.

var recognition = new webkitSpeechRecognition();
recognition.lang = "en-GB";

What about OTHER browsers?

Support for speech recognition in other browsers is almost non-existent. However, with HTML5 we can expect more and more advances in the field of speech recognition.
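Because support varies, it is safer to check for the API before using it. The sketch below looks for both the unprefixed and the webkit-prefixed constructor. The helper name `createRecognizer` is an assumption, and `scope` would normally be `window`.

```javascript
// Returns a recogniser for the given global object, or null when the
// browser has no speech recognition support.
// `scope` is normally `window`; it is a parameter so the check is easy to test.
function createRecognizer(scope, language) {
  var Recognition = scope.SpeechRecognition || scope.webkitSpeechRecognition;
  if (!Recognition) {
    return null; // Unsupported browser: the caller should offer a fallback.
  }
  var recognition = new Recognition();
  recognition.lang = language || 'en-US';
  return recognition;
}
```

A page could then call createRecognizer(window, 'en-GB') and show a typed input instead when the result is null.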

The HTML5 Speech Recognition API, which Mozilla’s MDN marks as experimental, gives JavaScript access to the browser’s audio stream and converts it to text. Microphone access is therefore mandatory. If your site is served over HTTPS, the browser remembers these permission settings.

Other JS libraries, such as PocketSphinx (http://dgit.in/PcktSphnx), work on Firefox, Edge and Chrome. However, their accuracy is not as high as that of annyang. But their very existence gives us hope that speech recognition will soon become an integral part of web applications and will be supported extensively by other browsers.

Security

Pages served over HTTP require permission each time they want to capture audio, in a similar way to requesting access to other items via the browser. Pages on HTTPS do not have to request access repeatedly, as the browser remembers the access provided to them.

The Chrome API interacts with Google’s Speech Recognition API, so all of the data goes via Google and whoever else might be listening. Also, once the permission is granted, the application can keep listening until the browser window is closed or until it is explicitly stopped.

In the context of JavaScript, the situation is slightly different, as the entire page has access to the output of the audio stream. Hence any script running on the page can read what was recognised, and you have to handle that output with care.
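Since an application can keep listening until it is explicitly stopped, one precaution is to stop recognition whenever the page is no longer visible. The following is a minimal sketch of that idea. The helper name `stopListeningWhenHidden` is made up, `doc` would normally be `document`, and isListening() and abort() are the annyang methods listed in the API reference.

```javascript
// Hypothetical helper: stops recognition whenever the page is hidden,
// for example when the user switches to another tab.
function stopListeningWhenHidden(doc, annyang) {
  doc.addEventListener('visibilitychange', function () {
    if (doc.hidden && annyang.isListening()) {
      annyang.abort(); // Stops listening and turns off the mic.
    }
  });
}
```

Whether to resume automatically when the page becomes visible again is a design choice; asking the user to restart listening explicitly is the more cautious option.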

OTHER USES OF CHROME’S WEB SPEECH API

Web Apps:

- Speechnotes – Speechnotes is a dictation platform that has its own website and an Android app. The platform comes with handy assists regarding insertion of punctuation and capitalisation. And it works perfectly well offline. http://dgit.in/SpchNotes

- Dictation – This one is also a dictation app that comes with its own Chrome app. This was developed by Amit Agarwal. The platform uses the x-webkit-speech attribute of HTML5 that is only implemented in Google Chrome. http://dgit.in/Dictn

- Chrome Demo – This one’s the standard demo of the Web Speech API on Google Chrome. http://dgit.in/ChrmDemo

SOME OTHER VOICE RECOGNITION JAVASCRIPT LIBRARIES

- Artyom – Artyom.js is a useful wrapper of the speechSynthesis and webkitSpeechRecognition APIs. Besides, artyom.js also lets you add voice commands to your website easily. http://dgit.in/ArtyomJS

- PocketSphinx – Pocketsphinx.js is another speech recognition library written entirely in JavaScript and running entirely in the web browser. It does not require Flash or any browser plug-in and does not do any server-side processing. It makes use of Emscripten to convert PocketSphinx from C into JavaScript. Audio is recorded with the getUserMedia JavaScript API and processed through the Web Audio API.

Credits

- Tal Ater – the author of the annyang library http://dgit.in/TalAter

- Mac Terminal CSS inspiration from codepen by http://dgit.in/DenCPen

- For the basic javascript of ELIZA – George Dunlop and http://dgit.in/PeccviCm